On February 23, 2026, the American AI company Anthropic made a serious accusation. It claimed that three Chinese AI laboratories had conducted large-scale attacks against its technology. The companies named were DeepSeek, Moonshot AI, and MiniMax. According to Anthropic, these labs used a method called distillation to copy its AI model's abilities. Together, the campaigns reportedly involved over 16 million exchanges through around 24,000 fake accounts.

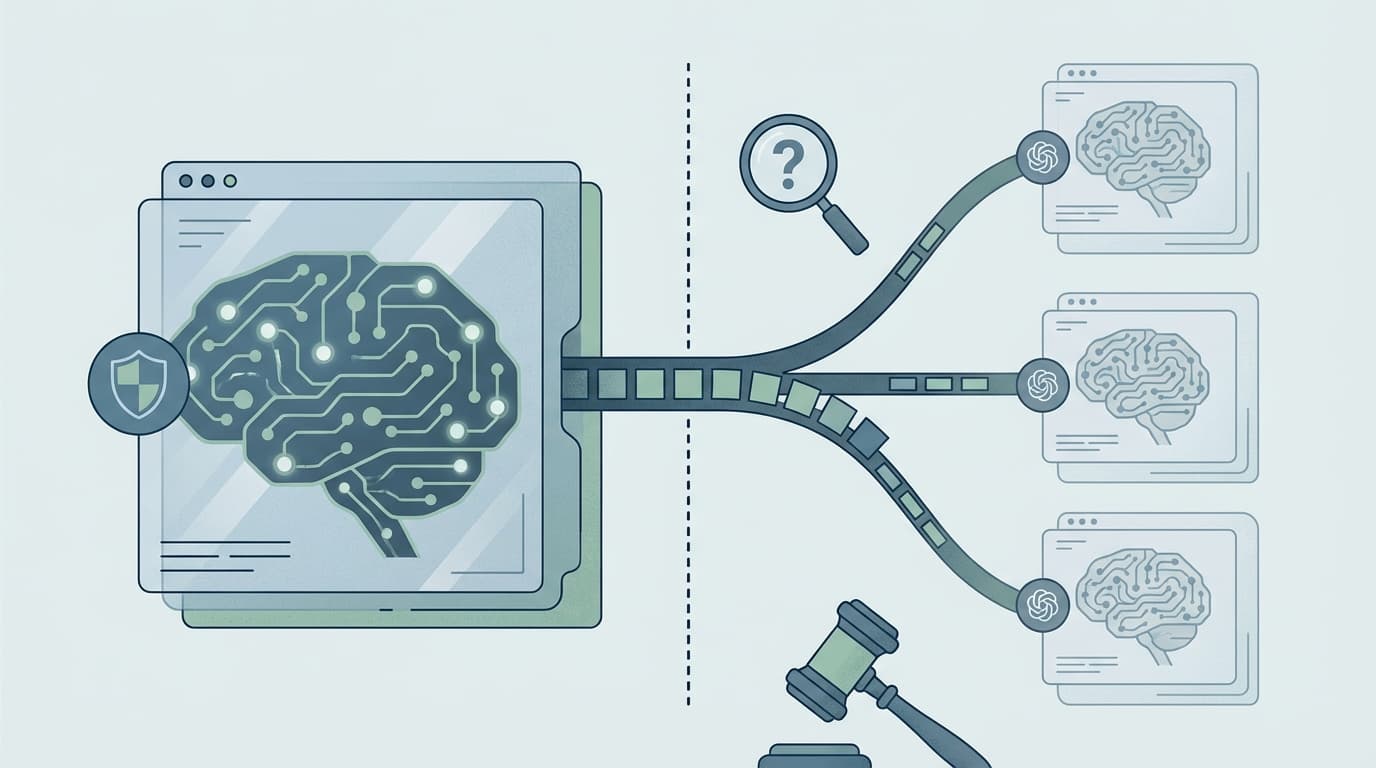

Distillation is a technique where a weaker AI model learns from the outputs of a stronger one. It is legitimate when companies use it on their own models. However, Anthropic argues it becomes illicit when competitors use it to steal capabilities. By Anthropic's count, MiniMax was responsible for over 13 million exchanges targeting coding and tool use. Moonshot AI generated more than 3.4 million exchanges focused on reasoning and computer vision. DeepSeek conducted over 150,000 exchanges aimed at reasoning and avoiding political censorship.
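To make the idea of distillation concrete, here is a minimal sketch of the classic soft-label form, in which a student model is penalized for disagreeing with a teacher model's output probabilities. This is an illustration only: Anthropic's report, as summarized here, does not say which training method the labs used, and someone with only API access would typically train on the generated text rather than on raw scores. The function names and the `temperature` value are assumptions chosen for the example.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's distribution to the student's.

    Hypothetical helper: the loss is zero when the student matches the
    teacher exactly and grows as their predictions diverge.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Made-up scores over three possible answers.
teacher = [4.0, 1.0, 0.5]
student = [2.0, 2.0, 1.0]
loss = distillation_loss(teacher, student)
```

Training the student means adjusting it, over many examples, so that this loss shrinks. The more teacher outputs are available, the more of the teacher's behavior the student can pick up.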

Anthropic identified the campaigns through careful analysis of account activity. The company tracked patterns in data such as shared payment methods and coordinated timing. Attackers used networks of fraudulent accounts that Anthropic calls hydra clusters. If one account was banned, another would immediately replace it. Some accounts were even linked to the public profiles of senior laboratory staff.
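One way to picture how shared payment methods can tie accounts together is to treat each shared method as a link and then collect every account reachable through such links. The sketch below does this with a union-find structure. It is a simplified illustration under stated assumptions, not Anthropic's actual detection system: the `cluster_accounts` function, the record format, and the sample identifiers are all hypothetical.

```python
from collections import defaultdict

def cluster_accounts(records):
    """Group account IDs that share any payment fingerprint.

    `records` is an iterable of (account_id, payment_fingerprint) pairs
    (a hypothetical format). Returns a list of sets, largest cluster first.
    """
    parent = {}

    def find(x):
        # Follow parent links to the cluster representative.
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_account_for = {}  # payment fingerprint -> first account seen using it
    for account, payment in records:
        find(account)  # register the account even if it shares nothing
        if payment in first_account_for:
            union(first_account_for[payment], account)
        else:
            first_account_for[payment] = account

    clusters = defaultdict(set)
    for account in list(parent):
        clusters[find(account)].add(account)
    return sorted(clusters.values(), key=len, reverse=True)

# Made-up sample data: a1, a2, and a3 are linked through two cards.
sample = [
    ("a1", "card-1"),
    ("a2", "card-1"),
    ("a2", "card-2"),
    ("a3", "card-2"),
    ("b1", "card-9"),
]
groups = cluster_accounts(sample)
```

Here `a1` and `a3` never share a card directly, but both are linked to `a2`, so all three land in one cluster. The same linking idea extends to other signals the article mentions, such as coordinated timing, and it explains why banning a single account does little if its replacements reuse the same links.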

This case has significant implications for national security and global AI competition. Anthropic warns that models built through illicit distillation often lack important safeguards. Without these protections, dangerous capabilities could spread to harmful actors around the world. The company believes this situation reinforces the need for stronger export controls on AI technology. Experts say that preventing such attacks will require cooperation across the entire AI industry.